“You’re a homosexual man twisted by drugs and surgery into a crude mockery of nature’s perfection.” The voice and face belonged to U.S. President Joe Biden; the date was January 25th, 2023.

The video got 12,000 likes on Instagram. How many people do you think believed he really said that? AI technology is blurring the lines between what’s real and what’s fake, and it’s no longer merely for entertainment.

Are we moving towards some kind of 1984 dystopia where AI misinformation is used for propaganda, and democracies are in danger?

The reality is it’s already here. Be courageous and read on to understand the enormous threat fake news poses and how blockchain technology may have solutions to quieten its ugly voice.

If you’d like to read more on how AI and blockchain work together to make a difference in the world, hop over to the Onchain report on AI & Blockchain Disruption.

What is fake news – and how can it throw the world into the abyss?

AI misinformation is a hot topic. But fake news has been used to reach (unorthodox) objectives and as a powerful propaganda tool for a long time. It’s not a new trick in anyone’s book.

Let’s go back in time to get a sense of what reality is worth.

Two examples of fake news impact

1. Drinking children’s blood

On Easter Sunday 1475 in Trent, Italy, the Jewish community was blamed for the murder of a 2.5-year-old boy. They allegedly drank his blood to celebrate Passover. In response, the Prince-Bishop of Trent, Johannes IV Hinderbach, ordered the arrest and torture of the city’s Jewish community. Fifteen were executed, the majority burned alive.

2. The Great Moon Hoax

On August 25, 1835, the New York newspaper The Sun brought earth to the moon. A series of six articles fascinated readers with man-bats who build sapphire temples with roofs of gold on the moon.

The British author, Richard Adam Locke, reportedly never believed that people would take his satire as fact. But they did. The positive impact for the paper was that sales shot up.

Conclusion: Fake news is deadly for some and profitable for others.

Fake news as a political tool

While stories of child-murdering Jews and other smears have long been exposed as fake, they instill doubt and suspicion in people’s minds and keep coming back to life.

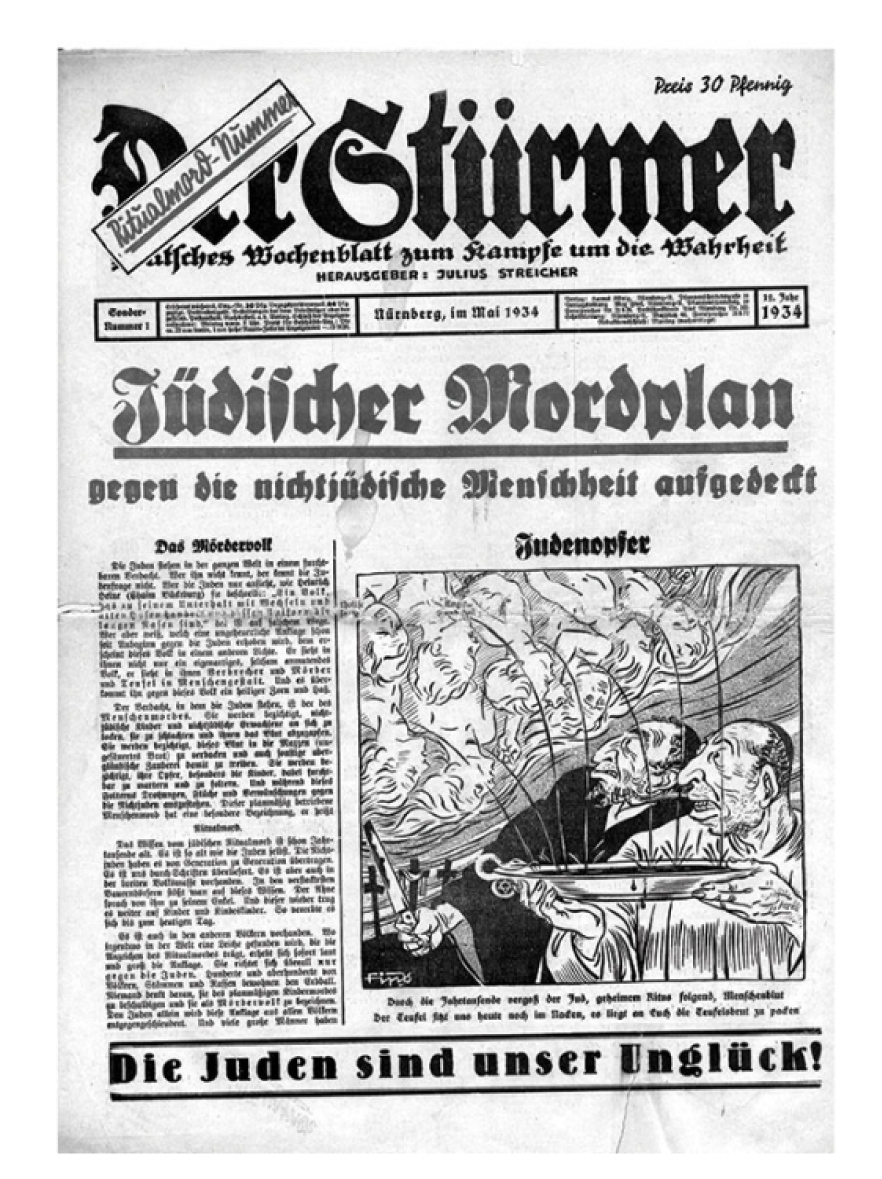

Der Stürmer was a German weekly newspaper published during the Nazi era. In 1934, 459 years after the events in Trent, the paper picked up the anti-Semitic blood libel and crafted many more fake stories.

Accordingly, the New York Times labeled Hitler a pioneer of fake news who effectively turned disinformation into a propaganda tool. Without fake news, 70–85 million people might not have perished during World War II.

Nearly 100 years later, we have more powerful tools than the fake news pioneer. The Nazi propaganda machine in 1934 was regional; AI fake news in 2024 goes global. Print media, newspapers, and analog films gave way to digital media, which spreads information in milliseconds before anyone can say “but…”

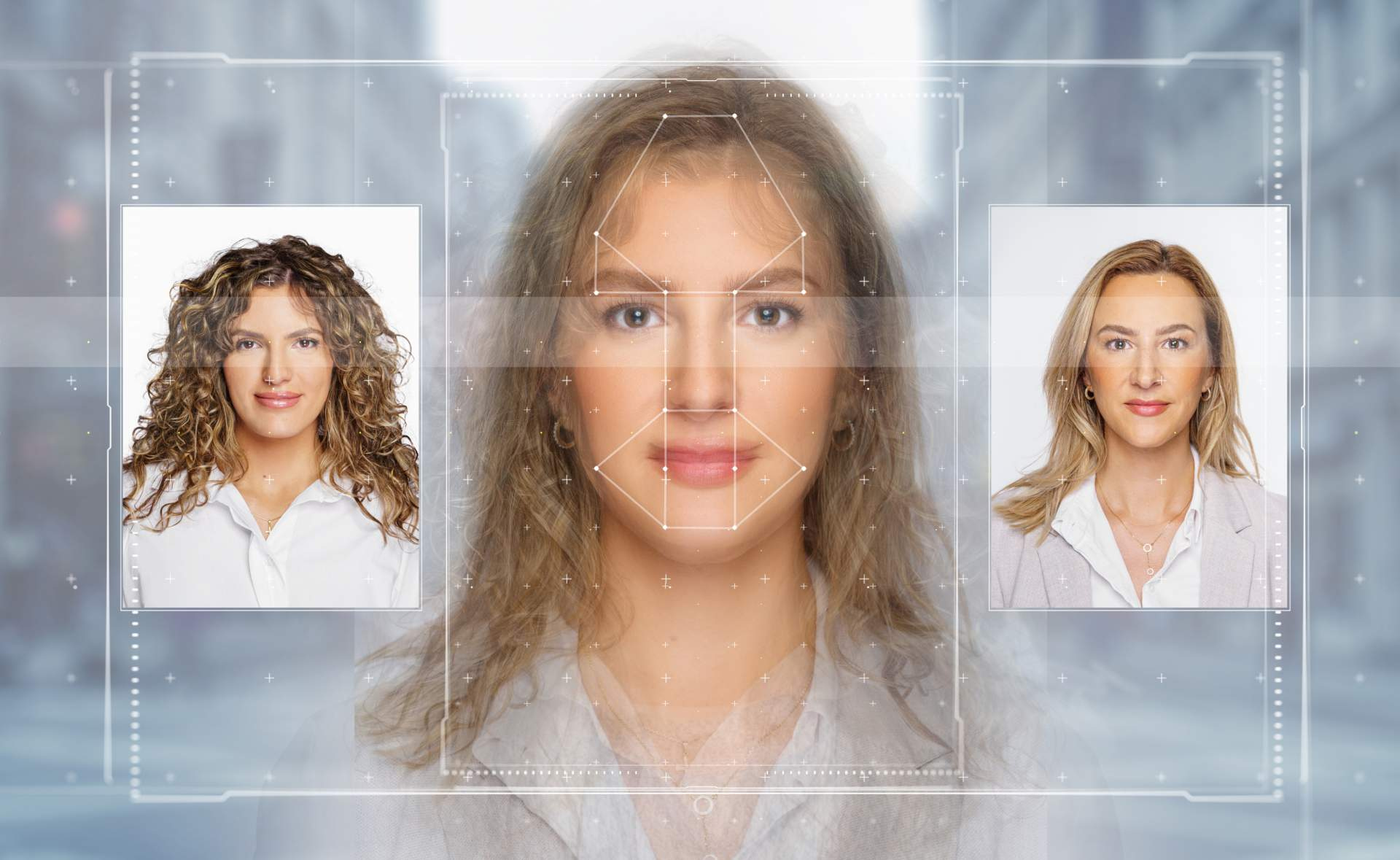

AI technology is so sophisticated that digital media fakes are indistinguishable from real-world records. Unlike the Metaverse, deepfakes can actually create believable fake realities.

AI and fake news – when deep learning creates convincing illusions

What are deepfakes?

Deepfakes are hyper-realistic digital content creations. They typically use existing content and alter it for videos, images, social media posts, news articles, and entire websites that look legitimate. Traditional fact-checking mechanisms are overwhelmed by the sophistication and scale of the phenomenon. When they replicate public figures, things can get messy.

Those who were reluctant to put their trust in AI will see some of their worst predictions come true unless the monster is tamed.

The deepfake ‘monster’ in action

In 2022, AI “was utilized in at least 16 countries to sow doubt, smear opponents, or influence public debate,” Freedom House, a human rights advocacy group, reports. This includes impersonating real people.

Six years earlier, disinformation played a significant role in the 2016 U.S. presidential election and the Brexit referendum, as we later learned. In Myanmar, disinformation promoted through Facebook algorithms contributed to a genocide.

An astonishing example of how believable deepfakes are came in June 2022, when Franziska Giffey, mayor of Berlin, held a video conversation with Vitali Klitschko, mayor of Kyiv. Only the Kyiv mayor never participated and had no idea the video conversation ever happened.

Giffey remained confident she was speaking to the real person for 15 minutes before suspicion arose. Eventually, the connection broke down.

In this case, it was easy to verify that the call was hijacked by AI. But when politicians’ faces are inserted into scenes they were never part of, the social media consumer on the receiving end may never even ask whether the footage is real.

The bigger picture reveals an even more daunting threat. Disinformation increases social and political divisions. As trust declines, people retreat into echo chambers. They only trust information that confirms their pre-existing beliefs. The circle closes as this further widens the social and political gaps, causing democracy to crumble.

The Web’s dilemma with truthfulness

Unfortunately, while the Web is free, it doesn’t function according to democratic principles. Web2 is more aligned with Darwin’s survival of the fittest. Or shall we say, “the winner takes it all”?

The business models of big digital platforms such as Google, Facebook, and X are based on advertising and the need to continuously increase engagement. There’s little incentive to inhibit fake news distribution. The question of content authenticity and credibility is secondary.

How far advertisers can take their creativity to provoke the desired reaction varies from platform to platform. Each has its own view on content moderation vs. free speech, ethical boundaries, and fact-checking mechanisms.

Authenticity and truth are often the first victims when the competition for attention is strong, and greed takes over.

AI fake news detection remains a serious challenge

Now that we’ve shown you the very dark side of AI-generated content, it’s time to ask what to do about it. The truth is, there’s currently a lack of efficient methods to fight or even detect fake news.

NLP (natural language processing) tools can analyze writing style, check facts, and assess the credibility of sources. However, these AI tools are still not mature enough to understand the nuances and context of news. They are also susceptible to false positives, meaning they can tag some genuine news as fake.

Additionally, AI tools remain only probabilistic. Truth detection cannot be guaranteed. In other words, we’re basically back to receiving inaccurate, biased information.

The solution could be a blockchain-based network of news sources. The digital ecosystem would authenticate a news source and follow it wherever it’s quoted or shared.

Blockchain can function as a ‘truth machine’ based on its transparency, immutability, and decentralization, which make it a tool for verifying the authenticity of digital content.

How blockchain helps AI detect fake news

To ensure the authenticity of content, it is necessary to trace it back to its original source. Does it come from a known, credible source, an authorized news channel at the relevant location, or a random individual on the internet? With blockchain technology, it is possible to track the distribution of digital content and create a clear audit trail.
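The audit-trail idea can be illustrated with a small hash chain, the basic structure blockchains build on. This is a minimal sketch, not any platform's actual implementation: the function names (`append_event`, `trail_is_intact`), the field names, and the example domains are all hypothetical, and a real system would replicate the trail across many nodes instead of keeping it in one list.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry (assumption)

def entry_hash(body: dict) -> str:
    """Hash an entry deterministically (sorted keys, so field order does not matter)."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()

def append_event(trail: list, content_id: str, actor: str, action: str) -> dict:
    """Append a distribution event that commits to the previous entry's hash."""
    prev = trail[-1]["hash"] if trail else GENESIS
    body = {"content_id": content_id, "actor": actor, "action": action, "prev": prev}
    entry = dict(body, hash=entry_hash(body))
    trail.append(entry)
    return entry

def trail_is_intact(trail: list) -> bool:
    """Recompute every hash and check that each entry links to its predecessor."""
    prev = GENESIS
    for entry in trail:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev"] != prev or entry_hash(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True

trail = []
append_event(trail, "article-001", "newsroom.example", "published")
append_event(trail, "article-001", "aggregator.example", "shared")
print(trail_is_intact(trail))  # True

trail[0]["actor"] = "unknown.example"  # someone rewrites the origin of the story
print(trail_is_intact(trail))  # False
```

Because every entry commits to the one before it, rewriting where a story came from breaks the chain for everyone who checks it.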

Some pioneering systems are already operative. In January 2024, the media giant Fox Corp. launched the content verification tool Verify. The open-source app built on the Polygon blockchain allows content creators to register, license, and track the use of their content.

Content consumers can easily identify content from trusted sources with this type of tool. After creating and publishing a piece of content in their own system, creators upload it to Verify. There the content receives a unique identifier. The content is available via API to licensees through smart contracts.

The Arweave team took a slightly different approach. Together with the provenance layer Irys (formerly Bundlr), they developed the Digital Content Provenance Record (DCPR).

DCPR is a standard that helps generate verifiable, timestamped records of digital content. It can be plugged into any blockchain. According to the website, it “provides a provably neutral ledger for recording and safeguarding legitimacy of digital information.”

Using blockchain-based attestations for each piece of content allows for verification of authenticity. The cryptographic hash computed when the creator signs the digital content makes any later alteration detectable. Creators sign with their private key, and users can verify the signature using the creator’s public key.
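The hashing half of this mechanism can be sketched with Python's standard library alone. The example below only shows how a recorded fingerprint exposes an altered copy. The signing step is deliberately left out, since it needs an asymmetric-key library, and the function names and sample headlines are made up for illustration.

```python
import hashlib
import hmac

def fingerprint(content: bytes) -> str:
    """SHA-256 digest of the content, as a hex string."""
    return hashlib.sha256(content).hexdigest()

def matches_attestation(content: bytes, attested_fingerprint: str) -> bool:
    """True only if the content is byte-for-byte what was attested."""
    return hmac.compare_digest(fingerprint(content), attested_fingerprint)

original = "Mayor opens new bridge on Monday.".encode("utf-8")
# In a real system this value would be signed by the creator and recorded on-chain.
attested = fingerprint(original)

altered = "Mayor closes new bridge on Monday.".encode("utf-8")

print(matches_attestation(original, attested))  # True
print(matches_attestation(altered, attested))   # False
```

Changing a single word produces a completely different digest, which is why a fingerprint stored somewhere tamper-resistant is enough to flag a manipulated copy.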

Adding timestamps indicating when the content was originally created and published can provide another layer of credibility. It places each activity related to the content piece in chronological order. Deepfakes, which need real content to learn from, then find it harder to pose as the original.
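A toy registry shows why the first recorded timestamp matters. This sketch assumes registrations arrive in chronological order, as they would when the timestamp comes from the block that includes them. The class name `FirstSeenRegistry` and the numeric timestamps are hypothetical.

```python
import hashlib

class FirstSeenRegistry:
    """Remembers the first timestamp at which each piece of content was registered."""

    def __init__(self) -> None:
        self._first_seen: dict[str, int] = {}  # fingerprint -> first timestamp

    def register(self, content: bytes, timestamp: int) -> int:
        """Record the content and return the timestamp of its FIRST registration."""
        key = hashlib.sha256(content).hexdigest()
        # setdefault keeps an existing entry, so a later upload cannot backdate itself.
        return self._first_seen.setdefault(key, timestamp)

registry = FirstSeenRegistry()
speech = b"Full recording of the press conference"

print(registry.register(speech, 1_700_000_000))  # 1700000000
print(registry.register(speech, 1_700_009_999))  # 1700000000 (the re-upload gains nothing)
```

An altered copy hashes to a different fingerprint and can only receive a later timestamp, so it cannot claim to predate the recording it was derived from.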

By integrating blockchain-based attestations into feedback systems, users could also identify unauthorized alterations and report them. Organizations could build a decentralized trust and accountability system.

And there’s more. The same principle can be applied to content creators themselves. Using platforms like uPort or Sovrin, journalists, reporters, or anyone providing digital news content can link it to their identity. This enables users to verify the creator’s identity and ensures they are human.

To take this a step further, creators can receive credentials from trusted organizations that attest to their expertise or authority. Adding these to the content gives news consumers even more assurance that the information they receive is credible.

The most far-reaching possibility is to tokenize digital content. Creators can turn important content pieces into NFTs, acting as unique copies. This not only helps combat fakes but also increases creators’ credibility. In addition, they can sell NFT copies and earn money.

Blockchain and fake news – exposing the truth

AI-generated fake news is not to be taken lightly. The phenomenon poses serious threats to individuals, institutions, and societies. Blockchain cannot prevent people (or organizations) from creating fake news and deepfakes with the help of AI. But it can expose them and identify authentic information.

The threat is equally acute for content creators, journalists, videographers, advertisers, influencers, news outlets, and, in short, anyone posting on the internet. In the age of AI, no content is immune to malicious altering.

To make blockchain tools effective, the technology needs to be implemented much more widely. Only when verification mechanisms become standard in the media can we ensure that the news we consume is credible – or at least comes from credible sources.

This is one area where integrating blockchain with AI technologies can make a meaningful difference. Discover additional areas and use cases of AI and blockchain synergies impacting the way we handle data. Read the Onchain research report on AI x blockchain disruption.

Dr. Ananya Shrivastava was a major contributor to this article. This article was co-authored by Sven Kamieth and Ruth M. Trucks.